Trump’s Executive Order, FDA National Priority Vouchers, and the New Strategic Framework for Psilocybin, Methylone, and Noribogaine

The FDA’s acceleration of psychedelic and psychedelic-adjacent therapies should not be mistaken for therapeutic validation or imminent broad commercialization. The National Priority Voucher may compress the regulatory review window, but it does not approve products, lower evidentiary standards, or resolve the clinical burdens surrounding safety, durability, abuse potential, trial integrity, and controlled delivery.

The selected programs — psilocybin for depression, methylone for PTSD, and noribogaine for alcohol use disorder — are moving under one policy framework, but they are not one pharmacological class. Each carries a different safety profile, regulatory failure mode, and market-access constraint.

The deeper institutional issue is timing. Regulatory acceleration may create strategic optionality for sponsors and investors, but it also exposes weak evidence faster. In a conflict-heavy geopolitical cycle, the veteran-health framing adds another layer: these therapies may become relevant not only to psychiatric innovation, but to broader readiness for trauma, addiction, and post-conflict mental-health burden.

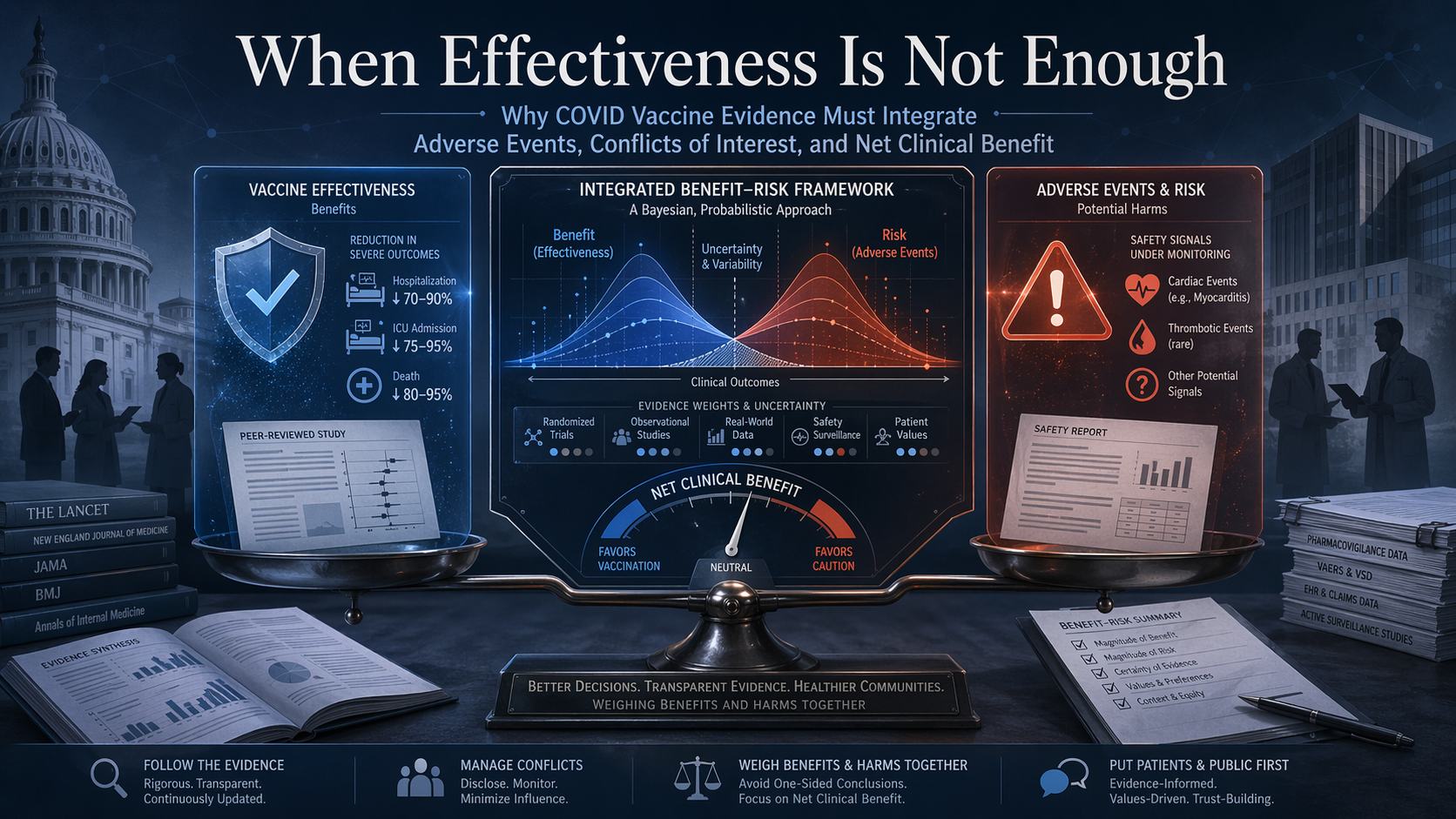

HHS’s Blocked CDC Report and the Limits of Effectiveness-Only Evidence

The main issue is no longer whether one blocked CDC report had methodological weaknesses. The deeper issue is that COVID vaccine evidence has often been communicated through a structurally incomplete model: effectiveness is measured in one channel, while adverse events and net benefit–risk balance remain outside the main frame. Two influential studies helped shape the public narrative of protection, but neither was designed to answer the full recommendation question. For preventive products, the real standard cannot be protection alone. It has to be whether expected benefit outweighs expected harm in the population being asked to receive the product.

Workshop on the Use of Bayesian Statistics in Clinical Development

The EMA’s Bayesian workshop makes one point increasingly clear: modern clinical development is searching for ways to preserve decision-making under conditions of evidence scarcity. Borrowing is one response, but it carries a structural risk—when external evidence is not truly transferable, efficiency can become distortion. The deeper regulatory question is therefore not only how to import evidence, but how to preserve its continuity, interpretability, and safety meaning across the full lifecycle.
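To make the borrowing risk concrete, here is a minimal sketch of one common discounting approach, a power prior on a binary response rate with a conjugate Beta model. This is an illustration, not the method discussed at the workshop: the function names, the discount weight `a0`, and every count below are hypothetical.

```python
def power_prior_posterior(x_cur, n_cur, x_ext, n_ext, a0, alpha0=1.0, beta0=1.0):
    """Return Beta posterior parameters for a response rate.

    External data (x_ext responders out of n_ext) is down-weighted by
    a0 in [0, 1]: a0 = 0 ignores it, a0 = 1 pools it fully with the
    current trial (x_cur responders out of n_cur).
    """
    if not 0.0 <= a0 <= 1.0:
        raise ValueError("a0 must lie in [0, 1]")
    alpha = alpha0 + a0 * x_ext + x_cur
    beta = beta0 + a0 * (n_ext - x_ext) + (n_cur - x_cur)
    return alpha, beta


def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)


# Hypothetical counts: the current trial observes a 30% response rate,
# while the external source observed 60% (i.e. it may not be transferable).
current = {"x_cur": 6, "n_cur": 20}
external = {"x_ext": 60, "n_ext": 100}

for a0 in (0.0, 0.5, 1.0):
    alpha, beta = power_prior_posterior(**current, **external, a0=a0)
    print(f"a0={a0:.1f} -> posterior mean {beta_mean(alpha, beta):.3f}")
```

As the weight on the external data rises, the posterior mean moves away from what the current trial observed and toward the external rate. If the external population is not truly comparable, that movement is the distortion described above rather than a gain in efficiency.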

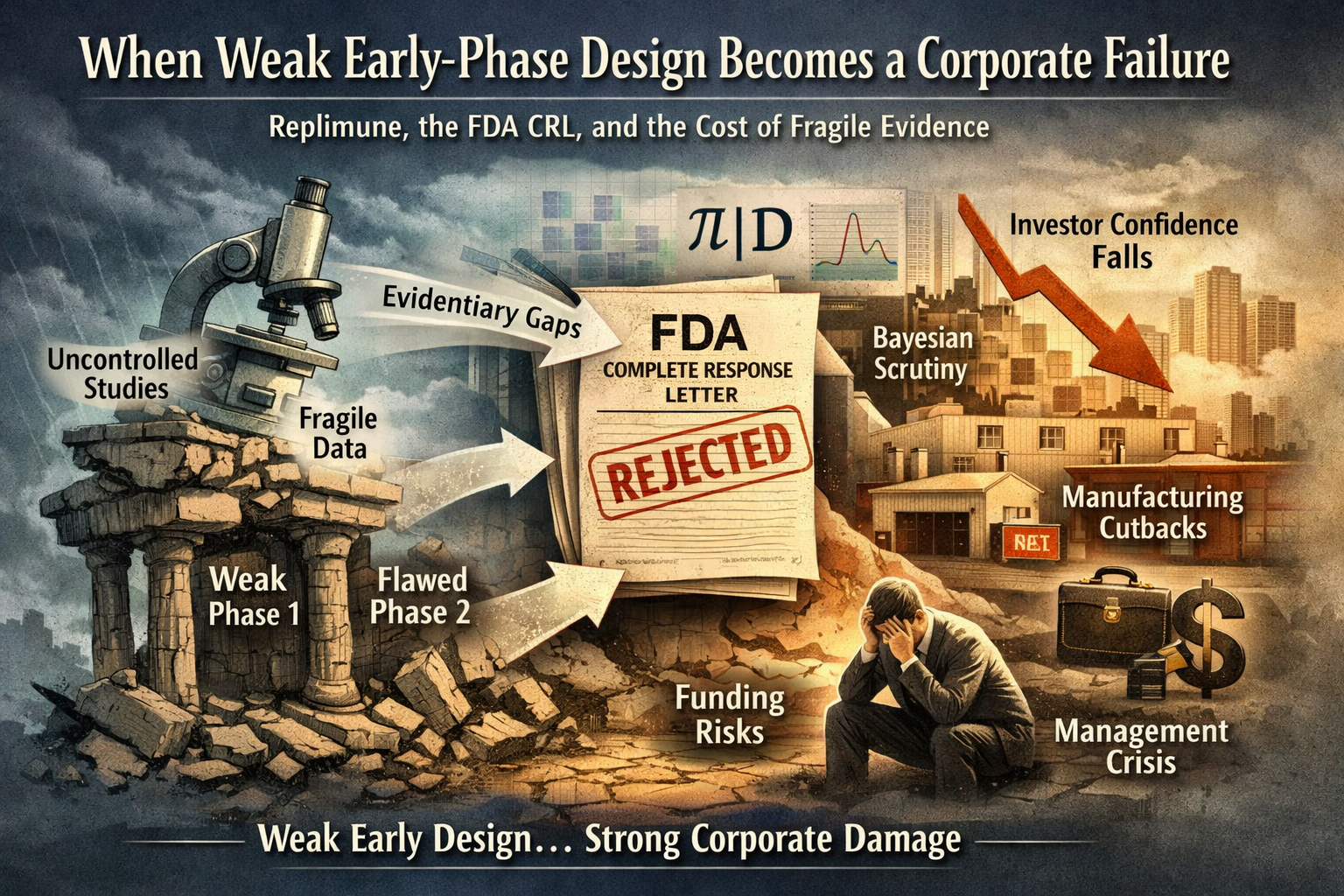

Replimune’s CRL and the Cost of Weak Early-Phase Design

Replimune’s FDA Complete Response Letter should not be read as a routine regulatory delay. It exposed a deeper structural problem: when early-phase studies are built to generate signal rather than establish clean causal evidence, the weakness does not remain confined to the clinic. It compounds across the program, undermining approval, compressing investor confidence, and destabilizing the company’s operating model. Under a more explicit Bayesian FDA, that vulnerability becomes even harder to manage, because ambiguous contribution of effect, weak comparators, and fragile inferential logic are less likely to be absorbed and more likely to be challenged directly.

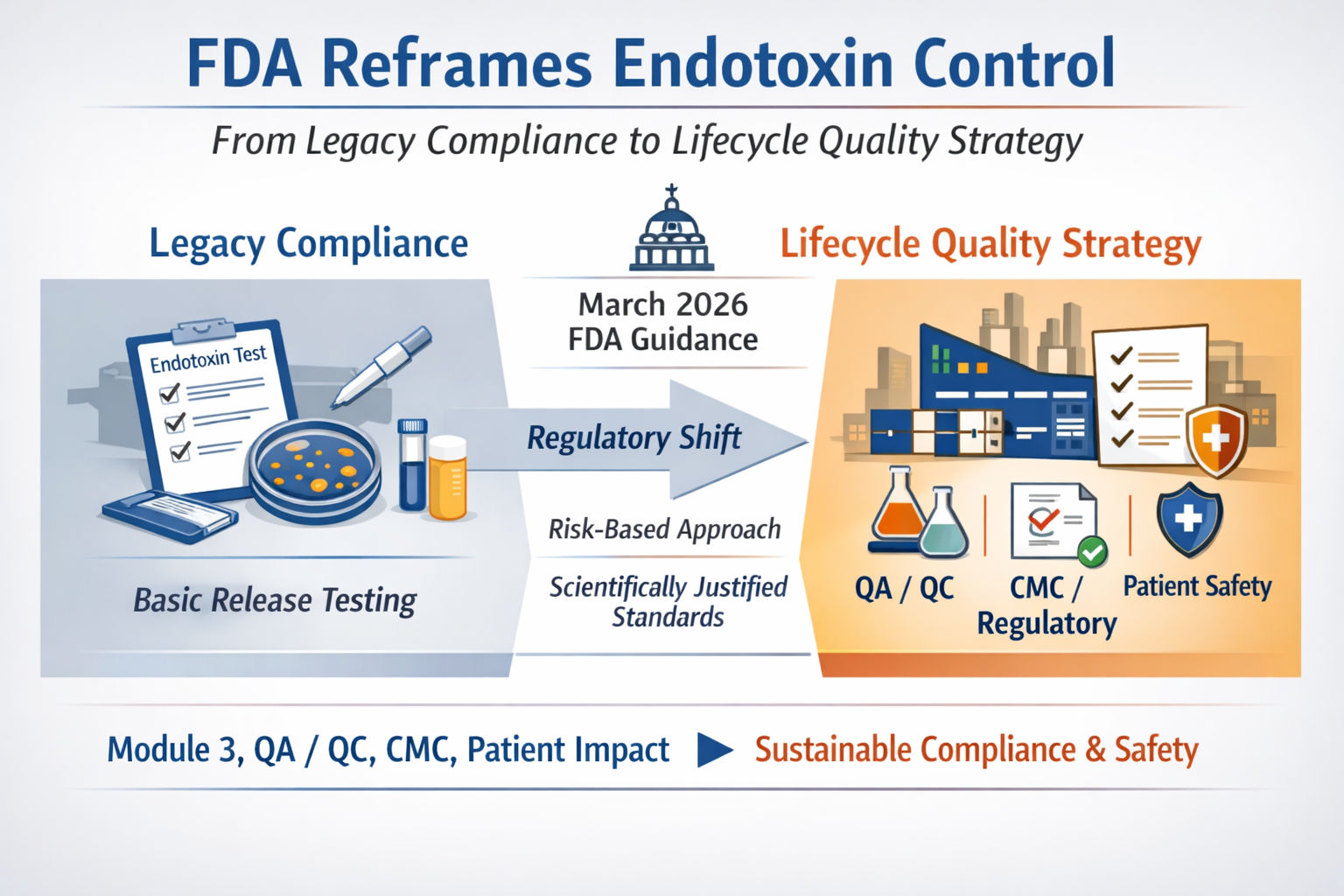

FDA’s New Endotoxins Guidance and the Shift from Release Testing to Lifecycle Quality Control

The FDA’s March 2026 endotoxins guidance should not be read as a narrow laboratory update. It signals a broader shift in regulatory expectations, moving endotoxin control from a release-test routine to a product-specific lifecycle quality strategy tied directly to Module 3, QA/QC, CMC, and patient safety. For firms, investors, and executive teams, the real issue is no longer whether a compliant procedure exists, but whether the company can defend its quality logic as a scientifically integrated system under a more demanding FDA standard.

NEJM’s HbF Promoter-Editing Papers, the Beam–Editas Split, and the Real Constraint on Gene-Edited Sickle Cell Therapy

Two recent New England Journal of Medicine papers have reinforced the therapeutic promise of HbF reactivation in sickle cell disease, but they also exposed a more important structural divide. The central question is no longer whether promoter editing can work. It is whether genomic elegance can survive the combined pressure of long-term safety uncertainty, multimillion-dollar treatment economics, and post-first-mover competition. Beam Therapeutics continues to advance its adenine base-editing strategy as a differentiated platform. Editas Medicine, despite publication-grade data, chose to discontinue reni-cel. The split reveals a harder truth now facing the field: in advanced gene editing, scientific validation alone is no longer enough.

The Logic Flow Behind FDA’s 2026 Shift: From Plausible Mechanism to NAM Validation

FDA’s March 18 NAM draft did not emerge in isolation. Read alongside the February Plausible Mechanism guidance, it points to a broader regulatory direction: away from rigid evidentiary defaults and toward more context-specific, human-relevant, and fit-for-purpose validation. BBIU’s earlier institutional reading had already identified that shift. The deeper implication is not weaker safety, but a higher burden of upstream verification, especially where preventable risk can be forced to surface before irreversible patient exposure.

FDA AEMS and the Next Phase of Drug Safety Oversight

FDA’s new AEMS platform is not just a reporting upgrade. It is a meaningful shift toward integrated, real-time post-market safety surveillance across FDA-regulated products. But stronger visibility does not automatically produce stronger interpretability. While AEMS improves signal detection, transparency, and regulatory coordination, FDA is explicit that the system does not establish causality on its own. This analysis argues that the deeper regulatory frontier is not surveillance alone, but lifecycle safety continuity: a framework that links mechanistic risk prediction, clinical follow-up quality, unresolved-case review, and post-marketing therapeutic context into one auditable architecture.

Remibrutinib (LOU064): Structural Classification of a Multi-Indication Phase III Clinical Program

Remibrutinib (LOU064) is not being developed as a single-indication asset, nor as a speculative expansion play.

Its Phase III and Phase IIIb clinical architecture reveals a deliberate, multi-axis strategy aimed at establishing a long-term, orally administered BTK inhibitor across chronic inflammatory and neuro-immune diseases.

Starting from chronic urticaria as the anchor indication, Novartis has constructed a program that combines pivotal replicates, head-to-head comparator trials, randomized withdrawal designs, pediatric extensions, and event-driven studies in progressive disease. The result is a development profile focused not only on efficacy, but on durability, positioning, and lifecycle control.

This document provides a structured classification of the full Phase III remibrutinib program, mapping indications, trial design logic, and strategic intent. It also highlights the constraints that define the ceiling of expansion: chronic exposure, long-term safety, and regulatory scrutiny inherent to covalent BTK inhibition.

What emerges is a controlled expansion strategy—broad in scope, but tightly bounded by safety architecture—suggesting that remibrutinib is being positioned as a foundational immunomodulatory platform rather than a single commercial bet.

Why Proliferation-Revealed Risk Must Be Forced Before Patient Exposure

This article examines why, under the FDA’s Plausible Mechanism Framework, forcing proliferation-dependent risk to surface in the laboratory is not optional but structurally necessary. It argues that allowing such risks to appear for the first time in patients represents a preventable transfer of uncertainty, one that is scientifically avoidable and ethically indefensible for irreversible therapies.

Post-Resolution Antithrombotic Therapy After AF Ablation: Why Event-Based Truth Fails Under Bayesian Regulatory Transition

The period after atrial fibrillation ablation represents a structural state change, not a continuation of untreated disease. Yet regulatory and trial architectures continue to operate as if the original causal driver remained intact.

This analysis demonstrates how event-based truth frameworks—designed for high-incidence, untreated pathology—become epistemically fragile in post-resolution states. As thromboembolic events attenuate and bleeding events persist, trials anchored to single-axis primary endpoints can achieve formal statistical success while failing to resolve net clinical value.

Under Bayesian regulatory transition, truth is no longer established by isolated efficacy signals, but by posterior belief over total consequence. When benefit becomes rare and harm recurrent, maintaining legacy endpoint hierarchies produces stability-through-inertia: guidelines remain intact, trials remain neutral, yet epistemic degradation accumulates silently.

This work argues that the unresolved tension in post-ablation antithrombotic therapy is not pharmacologic, but architectural. Resolving it requires a shift from event dominance to net-belief evaluation—where benefit, harm, and execution integrity are treated as co-equal truth carriers in regulatory inference.

Immunologic Ambiguity and Transparency Failure in First-in-Class In Vivo CRISPR Therapy

A fatal adverse event in an irreversible gene-editing trial is not merely a safety incident. It is a system-level test of regulatory memory.

In this case, an acute liver injury treated with corticosteroids—followed by death and an FDA clinical hold—was administratively processed without public causal disclosure. The regulatory system acted, but the evidentiary substrate remained confined to non-public channels. As a result, clinical development continued under a narrowed population while the underlying immunologic, procedural, and contextual uncertainties remained unresolved.

For irreversible CRISPR therapies, this model is structurally insufficient. When severe adverse events are adjudicated without transparency, safety becomes narrative-dependent rather than evidence-anchored. Without public reconstruction of what happened, why it was suspected, and how accountability was assigned, neither participants nor future patients can benefit from the learning such events are meant to generate.

In a Bayesian regulatory era, belief without memory is not safety. It is deferred risk.

Structural Reconfiguration of Hypertriglyceridemia Management Through Upstream Lipid Traffic Control

This analysis examines severe hypertriglyceridemia not as a problem of insufficient lipid lowering, but as a structural failure of historical therapeutic architectures to control event-dominant risk without displacing systemic stress.

For decades, pharmacologic strategies succeeded in reducing triglyceride values numerically while failing to stabilize the underlying lipid traffic system at saturation thresholds where acute pancreatitis emerges. The apparent availability of therapeutic options masked a deeper architectural misalignment: downstream modulation without upstream regulatory control.

The introduction of apoC-III–targeted intervention reconfigures this system by restoring control over lipid traffic flow and suppressing pancreatitis as the dominant failure mode. However, this shift does not eliminate systemic stress; it relocates it toward hepatic metabolic load and hematologic accommodation, transforming episodic catastrophic failure into chronic surveillance-dependent stability.

The resulting configuration appears stable at the surface level, yet reveals a deferred structural tension between event suppression and long-term metabolic reconciliation—highlighting a system that has changed its failure mode rather than resolved its constraints.

Regulatory Accountability Collapse Under Event-Based Truth

The FDA’s recall framework still treats quality failure as a sequence of isolated events rather than as an accumulating signal of manufacturer reliability. The Glenmark case exposes this structural blind spot. A recall can exist administratively without becoming epistemically relevant, while more than a decade of recurrent quality deviations remains unintegrated into regulatory truth.

This is not an enforcement failure. It is a memory failure.

The legacy p-value and gate-validation paradigm was never designed to price recurrence, provenance opacity, or long-run execution drift. Bayesian regulation creates the technical capacity to correct this—but only if accountability is encoded by design. Without historical performance as a formal prior, Bayesian tools risk accelerating belief updates without increasing truth.

In a belief-governed regulatory era, accountability cannot remain episodic. Reliability must be inferred over time, across products, sites, and people—or regulatory stability becomes a surface illusion sustained by deferred accumulation of risk.

FDA - Depth as Regulatory Truth

The FDA’s draft guidance on Minimal Residual Disease (MRD) and Complete Response (CR) in multiple myeloma marks a decisive shift in how regulatory truth is constructed. This is not an oncology-specific refinement, but a structural reallocation of epistemic burden. As overall response rates saturate and lose discriminative power, approval credibility migrates toward depth-based biological resolution governed by assay validity, data integrity, and methodological coherence.

Under this framework, accelerated approval is no longer constrained by trial size or population-level separability, but by the precision and governance of measurement systems. MRD does not relax regulatory standards; it relocates risk. The system preserves speed by embedding conditionality into assays rather than statistics, extending epistemic exposure across the product lifecycle. In this regime, regulatory advantage accrues not to those who generate deeper responses fastest, but to those who can sustain measurement coherence over time.

New FDA epistemic approach - From Living Products to Living Belief

In 2026, the FDA ceased to regulate drugs as finished objects.

Through the convergence of CGT lifecycle manufacturing control and Bayesian regulatory inference, it began to regulate them as living epistemic systems.

Cell and gene therapies no longer achieve truth through validation events. Their identity is sustained through continuous manufacturing governance, global data streams, and probabilistic belief updating. At the same time, Bayesian methodology dissolves the finality of Phase III, replacing hypothesis rejection with a permanently evolving posterior probability of therapeutic reality.

These two shifts form a single regulatory architecture: a unified epistemic control system in which manufacturing stability, clinical evidence, and real-world outcomes collapse into one continuously updated regulatory belief.

What appears as flexibility is in fact a structural concentration of power.

Regulatory authority now resides in those who control data, registries, and biological continuity across time.

In this regime, molecules are not approved.

They are continuously believed.

Cardiovascular Disease: LDL Lower Is Better—At What Structural Cost?

The expansion of PCSK9 inhibition into primary cardiovascular prevention marks a critical inflection point in modern lipid management. While statistical efficacy has been demonstrated, the structural question remains unresolved: whether aggressive LDL suppression translates into proportional system-level value.

This analysis demonstrates that the observed 1.8% absolute risk reduction comes at the cost of escalating pharmacological mass, subcutaneous delivery burden, and unpriced non-cardiovascular uncertainty—particularly in older, polymedicated populations where interaction density, not single-drug toxicity, defines real-world risk.

Under ODP–DFP scrutiny, PCSK9 inhibition emerges not as a failure of innovation, but as a signal of saturation: a state where biochemical precision outpaces clinical leverage, and where guideline legitimacy no longer guarantees public health efficiency.

This report does not dispute efficacy.

It audits proportionality.
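One way to read the 1.8% absolute risk reduction in proportionality terms is the number needed to treat (NNT), the reciprocal of the absolute risk reduction. The sketch below is plain arithmetic on that single figure. The summary above does not state the follow-up period or the underlying event rates, so the result applies only over whatever horizon the source trial used, and the function name is illustrative.

```python
import math


def number_needed_to_treat(absolute_risk_reduction):
    """NNT = 1 / ARR, with ARR expressed as a proportion (0.018 for 1.8%)."""
    if absolute_risk_reduction <= 0:
        raise ValueError("ARR must be positive for an NNT to be defined")
    return 1.0 / absolute_risk_reduction


arr = 0.018  # the 1.8% absolute risk reduction cited in the text
nnt = number_needed_to_treat(arr)

# Per 1,000 people treated over the (unstated) trial horizon:
events_avoided_per_1000 = round(1000 * arr)
treated_without_avoided_event = 1000 - events_avoided_per_1000

print(f"NNT: {nnt:.1f} (conventionally rounded up to {math.ceil(nnt)})")
print(f"Per 1,000 treated: {events_avoided_per_1000} events avoided, "
      f"{treated_without_avoided_event} treated with no event avoided")
```

Roughly 56 people carry the injection burden and interaction exposure for each event avoided, which is the ratio a proportionality audit has to weigh.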

Oralization at Scale and Deferred Risk Disclosure

The rapid expansion of GLP-1 receptor agonists is no longer an efficacy story.

It is an exposure-architecture story.

Between mid-2025 and early 2026, the GLP-1 system crossed a structural threshold: oralization removed friction, normalized chronic use, and accelerated population-scale exposure faster than regulatory codification could adapt. As a result, low-frequency but high-impact risks—previously buffered by injectable gating, specialist oversight, and limited uptake—have begun to surface as structurally relevant signals rather than isolated anomalies.

The absence of new label warnings on irreversible visual impairment, despite emerging observational data and pharmacovigilance reports, does not negate risk. It reveals a temporal asymmetry between evidentiary horizons and real-world deployment. In such systems, regulatory silence functions less as reassurance and more as a lagging indicator.

This analysis demonstrates how early structural signals—dismissed when incidence appears small—become legible only once scale validates them. The core risk is not molecular novelty, but the geometry of exposure itself.

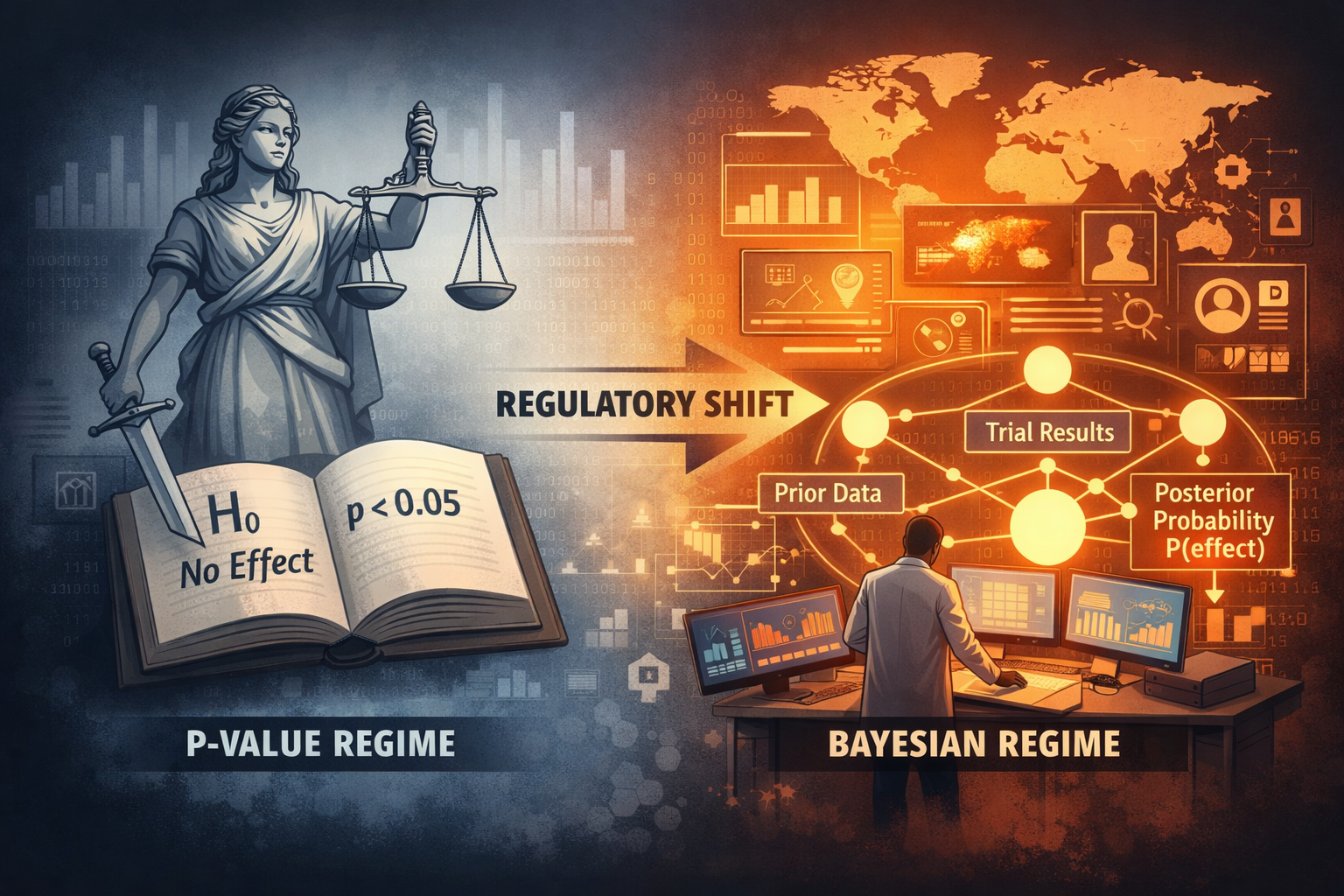

Regulatory Truth Rewritten — The FDA’s Bayesian Turn as Structural Reallocation of Epistemic Power

From Hypothesis Rejection to Belief Governance under ODP–DFP Stress

For more than half a century, FDA approval has not meant that a drug was true.

It meant that disbelief could no longer be sustained.

Under the frequentist regime, regulators never asserted that a therapy worked; they merely rejected the hypothesis that it did not. This preserved legal defensibility—but it also displaced epistemic responsibility. Clinical development proceeded inside a statistical fiction where “passing” replaced “being true.”

The FDA’s January 2026 draft guidance on Bayesian methodology ends that fiction.

By authorizing Bayesian primary inference in pivotal trials, the Agency has redefined regulatory truth as a quantified belief state—a posterior probability built from biology, historical trials, external datasets, and real-world evidence. Approval is no longer an event. It is a continuously updated belief about clinical reality.

This shift is not philosophical. It is structural.

Small populations, globalized execution, China-centered trial ecosystems, and exploding real-world data volumes have made hypothesis-based validation obsolete. In response, the FDA has converted its regulatory engine from event-based adjudication to belief governance.

Whoever controls data now controls truth.

And in a Bayesian FDA, truth is no longer declared—it is computed.
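As a rough illustration of what "computed" belief can look like, the sketch below updates a Beta prior on a response rate as successive batches of data arrive, and reports the posterior probability that the rate exceeds a threshold. It is a toy model under stated assumptions, not the procedure in the FDA draft guidance: the flat prior, the 0.5 threshold, the batch counts, and the function names are all hypothetical.

```python
import math


def beta_pdf(t, a, b):
    """Density of Beta(a, b) at t, for 0 < t < 1."""
    log_norm = math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
    return math.exp(log_norm + (a - 1) * math.log(t) + (b - 1) * math.log(1 - t))


def prob_above(threshold, a, b, steps=20000):
    """Approximate P(theta > threshold) under Beta(a, b) with the midpoint rule."""
    width = (1.0 - threshold) / steps
    total = 0.0
    for i in range(steps):
        t = threshold + (i + 0.5) * width  # midpoints never touch 0 or 1
        total += beta_pdf(t, a, b)
    return total * width


# Flat Beta(1, 1) prior; hypothetical batches of (responders, enrolled).
a, b = 1.0, 1.0
batches = [(4, 10), (7, 10), (8, 10)]
history = []

for responders, enrolled in batches:
    a += responders                # conjugate update: successes
    b += enrolled - responders     # conjugate update: failures
    belief = prob_above(0.5, a, b)
    history.append(belief)
    print(f"after {int(a + b - 2)} patients: P(rate > 0.5) = {belief:.3f}")
```

No single batch acts as a pass-or-fail gate here. Each one shifts the posterior, and the quantity a decision-maker reads is the current belief, which depends on which data streams are admitted into the update.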